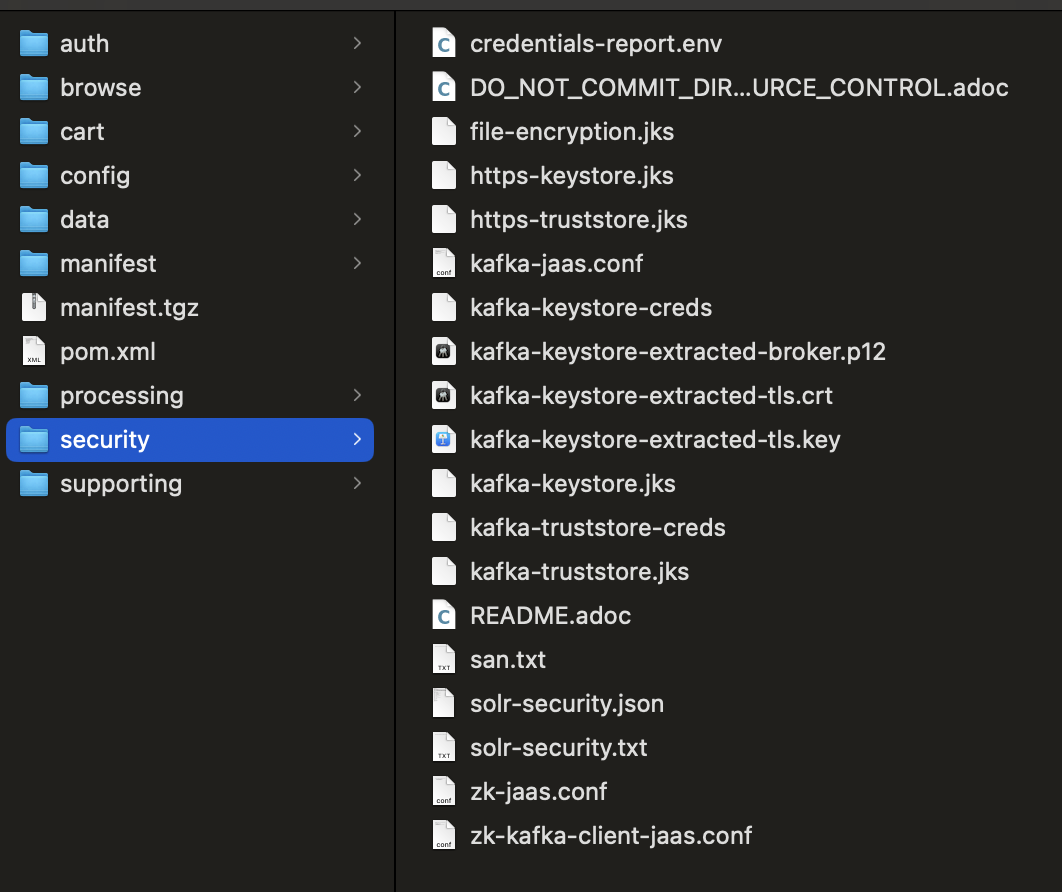

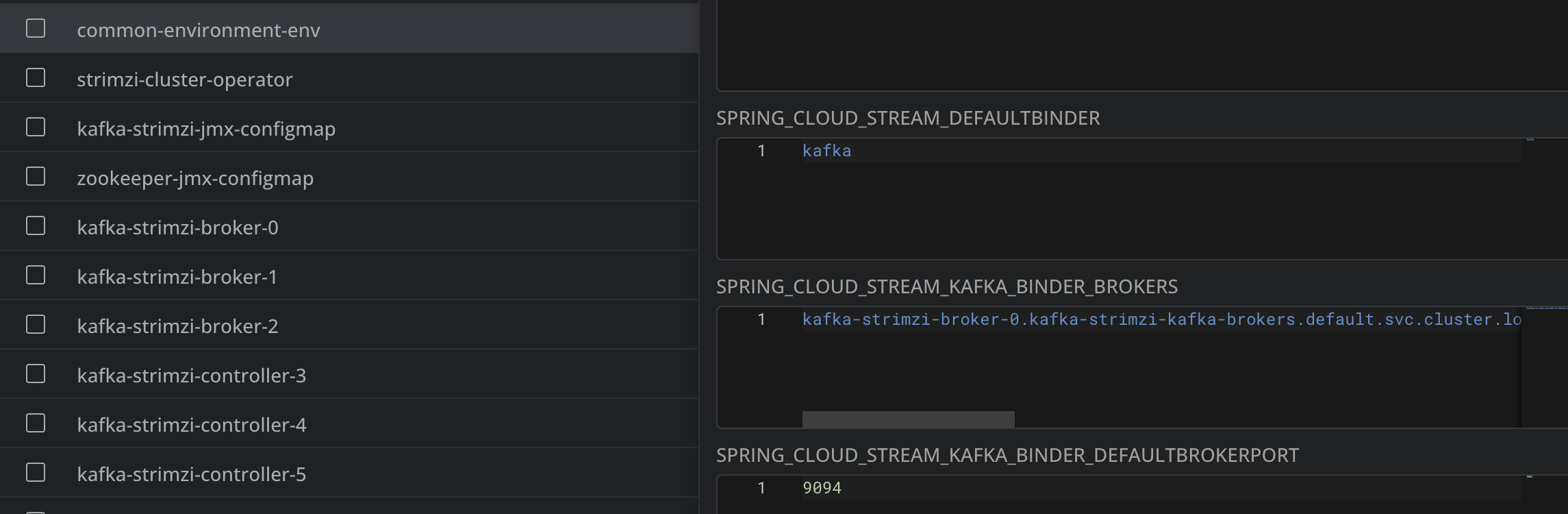

```yaml
- name: broker                          # new component, enabled
  platform: kafka-kraft-broker
  descriptor: snippets/docker-kafka-kraft-broker.yml
  enabled: true
  # ...
- name: kafka-kraft-controller          # new component, enabled
  platform: kafka-kraft-controller
  descriptor: snippets/docker-kafka-kraft-controller.yml
  enabled: true
  # ...
- name: broker                          # old component, disabled
  platform: kafka
  descriptor: snippets/docker-kafka.yml
  enabled: false
```
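The list above contains two entries named `broker`, and the switch to KRaft depends on only one of them being enabled. The following Python is a minimal sketch of that rule. It assumes that whatever reads this list skips entries with `enabled: false` and expects enabled component names to be unique. Neither behavior is confirmed by the snippet itself. `COMPONENTS` and `active_components` are hypothetical names, and the fields elided with `...` are left out.

```python
from typing import Any

# Hypothetical in-memory copy of the component list above; the fields
# elided with "..." in the original descriptor are omitted here.
COMPONENTS: list[dict[str, Any]] = [
    {"name": "broker", "platform": "kafka-kraft-broker",
     "descriptor": "snippets/docker-kafka-kraft-broker.yml", "enabled": True},
    {"name": "kafka-kraft-controller", "platform": "kafka-kraft-controller",
     "descriptor": "snippets/docker-kafka-kraft-controller.yml", "enabled": True},
    {"name": "broker", "platform": "kafka",
     "descriptor": "snippets/docker-kafka.yml", "enabled": False},
]


def active_components(components: list[dict[str, Any]]) -> dict[str, dict[str, Any]]:
    """Return the enabled components keyed by name (hypothetical helper).

    Assumption: disabled entries are ignored, and two enabled entries may
    not share a name, so a ValueError is raised if they do.
    """
    active: dict[str, dict[str, Any]] = {}
    for component in components:
        if not component.get("enabled", False):
            continue  # skip disabled entries, e.g. the old `kafka` broker
        name = component["name"]
        if name in active:
            raise ValueError(f"more than one enabled component named {name!r}")
        active[name] = component
    return active


if __name__ == "__main__":
    for name, component in active_components(COMPONENTS).items():
        print(f"{name}: {component['platform']} ({component['descriptor']})")
```

With the list as written, the old `kafka` broker drops out. The `broker` name resolves to the `kafka-kraft-broker` platform alongside the new controller. If both `broker` entries were set to `enabled: true`, the sketch would reject the list instead of silently choosing one.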

- v1.0.0-latest-prod